I am now at the end of week 37 of my MA, and predictably my ideas have developed and changed since I wrote my original proposal in Week 8:

https://terencemquinn91.org/2016/10/19/ma-fine-art-digital-unit-1-project-proposal-original/

This narrative is intended to give a fuller explanation of the changes I have made in my recently updated Study Proposal:

https://terencemquinn91.org/2016/10/19/ma-fine-art-digital-unit-2-updated-project-proposal/

Working Title:

Drawing on my pre-MA focus on life drawing, primarily of the female form, my working title was ‘How technological innovation can provide new opportunities for the artistic presentation of the life model’.

Progress report for my Project Proposal of Week 8

The current status of each aim and objective is described below.

Aims and Objectives:

1. To research how artists use technology in their practice.

I have made substantial progress towards these ongoing aims and objectives.

a. The history of how artists have adopted new technology.

b. How contemporary artists take advantage of digital technology today, in particular in the presentation of the human figure.

Through reading, visiting art exhibitions and conversations with a wide range of stakeholders in the art world, I now have a significantly greater knowledge of the use of technology in art practice, but of course this objective is still ongoing. I am particularly drawn to the work of William Kentridge, Antony Gormley, and Lorenzo Quinn.

Gormley and I at Crosby Beach, Liverpool

William Kentridge at my visit to the Marian Goodman Gallery, London

Lorenzo Quinn at the Venice Biennale

Lorenzo Quinn at my visit to the Halcyon Gallery, London

2. To research recent technology developments and how these might be employed in my own practice.

I have accomplished my aims and objectives with respect to making artworks that are born-digital, where their final manifestation is physical.

a. The use of digital devices to further the model’s character in the artwork.

My first-year MA display included adding ‘voice’ through the use of proximity sensing and conductive materials, employing the Bare Conductive Touch Board (an adapted Arduino). This gave the model a voice in two works: one a painting, Unrequited Love, and the other a sculptural work, Metamorphosis. The latter was entered for the International 2016 Lumen Prize Award, but sadly did not make the long list.

Unrequited Love

Metamorphosis

Bare Conductive Arduino

I was lucky enough to have two tutorials about these works with Prof Stephen Farthing, UAL Chelsea. I received a lot of technical support from Digital Media, Wimbledon, CCW Digital (now the UAL Digital Maker Collective) at Chelsea, and from Ed Kelly at Camberwell. I was taught how to make a hollow bronze cast in the foundry at Camberwell. So I have been making considerable use of opportunities across the UAL colleges.

b. 3D Scanning and Printing

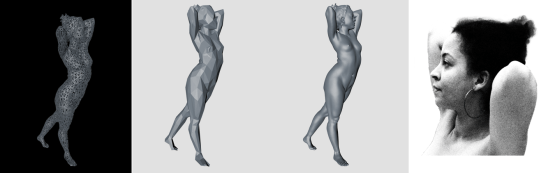

I acquired my own 3D scanner, an Occipital Structure Sensor, which I used to create three pieces of work. Firstly, Vanessa, a metre-long work in 244 pieces of laser-cut MDF: a contoured body, formed by taking a 3D scan and processing it in Autodesk 123D Make to produce the pieces, which then had to be assembled and glued together. This work was shown at the pop-up show at the end of our first term.

Secondly, the same scan was used as the basis for making the two sculptures in Metamorphosis. One was processed and printed in four parts (total 75cm tall) using 3D laser sintering in the Digital Fabrication Department of UAL Central Saint Martins (shown earlier). The other was the bronze bust made in the foundry at UAL Camberwell.

Thirdly, the 3D scan was morphed in various ways using Autodesk 123D Make, ZBrush and Photoshop. Four different images were digitally printed on canvas using the Digital Media Department at UAL Camberwell. These four canvasses together make the 3m x 75cm artwork Perspectives of Vanessa. Possibly the first image above will be projected on to the canvas, so that it can be seen rotating in 3D. I have already made this video and digitally printed a blank canvas with the same background, ready to do this.
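As a rough check on the contoured construction of Vanessa, the average thickness of each MDF slice follows from dividing the length of the work by the number of pieces. The sketch below assumes that all 244 pieces are stacked along the one-metre length with no gaps, which may not match how the work was actually sliced; the function name is hypothetical and used only for illustration.

```python
def average_slice_thickness_mm(total_length_mm: float, piece_count: int) -> float:
    """Average thickness of one slice, assuming the pieces are stacked
    face to face along the full length with no gaps (an assumption)."""
    if piece_count <= 0:
        raise ValueError("piece_count must be positive")
    return total_length_mm / piece_count


# Figures from the text: a metre-long work in 244 pieces.
thickness = average_slice_thickness_mm(1000.0, 244)
print(f"{thickness:.2f} mm per slice")  # prints "4.10 mm per slice"
```

Under these assumptions each slice comes out at roughly 4mm, which gives a feel for how fine the contouring is.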

I have not yet made any art works that are born-digital where the final manifestation is entirely digital. I have, however, made considerable progress in preparing for doing so.

c. 3D drawing and display including holography.

I have acquired a Wacom Intuos Pro Pen Tablet, which will enable me to draw in 2D. However, with the recent availability of Google Tilt Brush for Vive Virtual Reality, I will draw in 3D instead. I am awaiting delivery of a 3D Holographic Display.

3D holographic display unit ordered from Holus+ Technology in Canada

At the V&A Digital Design exhibition in September, I discovered that you can upload a 3D file to Sketchfab and view it in a VR Google Cardboard headset or equivalent. You can also record a still or video background using a 2D 360-degree camera, such as that now available at the Digital Maker Collective at Chelsea.

I also discovered that you can record video in 3D 360 degrees with a recently announced YUZE camera, which will be released at the VR & AR World Show at ExCeL on 19/20 October. I have obtained an entry ticket and will consider buying this device. It is a similar price to the VIVE. However, I will defer doing so until I have seen the 3D Augmented Reality video recording facility used by Double Me at the Ravensbourne Virtual Reality research centre.

Both the uploaded file and the video background can be viewed on the holographic 360-degree display.

d. 3D Painting in Virtual Reality.

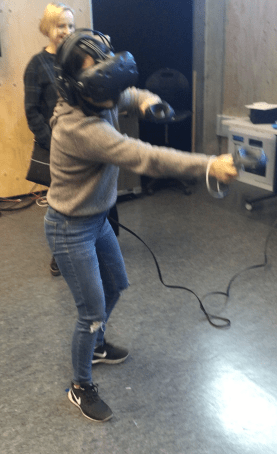

I have investigated drawing in 3D in Virtual Reality using the Vive VR Headset and Google Tilt Brush software. The Digital Maker Collective at Chelsea now have both. I tried them out at the Edinburgh Festival this summer, and at a recent meeting in the 4D studios at CSM, when I also installed Tilt Brush on my laptop. In so doing I discovered that, to use this software and hardware independently and effectively, I would need to acquire a top-of-the-range graphics card extension (GTX 1070) for my MacBook Pro, as well as the VIVE: a very expensive option. I will therefore have to make the painting at either Chelsea or CSM. However, once it is made, I hope to view the painting in VR using my own, significantly cheaper equipment.

I discovered a process that will help me do this. A recent release of Tilt Brush allows a 3D file to be uploaded to it, which could possibly be my 3D scanned image of Vanessa. This imported scan can then be used as a 3D template for the painting, or an object within the painting.

http://vrscout.com/news/tilt-brush-adds-rotate-resize-import-3d-models-pictionary/

Afterwards the painting can be uploaded to the Cloud, and downloaded to the iPhone to be viewed on a much cheaper VR headset.

I now have two relatively inexpensive Bobovar VR headsets, having ordered them after trying them out at the Lumen Prize at the end of September. I now need to identify a tracking device, possibly the Kinect, that can (with software) communicate with my iPhone in this headset. I have borrowed a Kinect from the Digital Maker Collective in order to conduct a test, and have ordered a book which I hope will explain how this can be done. This experiment will determine whether it is possible to view, walk around and walk into an already created painting in 3D and Virtual Reality, without further need of the VIVE/Tilt Brush technology.

GTX 970 attached to MacBook Pro

Vive at CSM 4D Studio

3D painting in VR using Google Tilt Brush

My VR Headsets

Kinect from UAL Digital Maker Collective

Acquired book – I may need to program in C++

e. 3D Video Recording in Virtual Reality

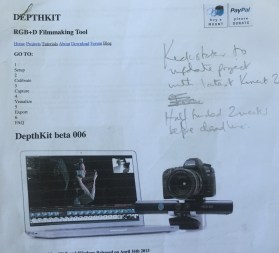

I have investigated this emerging technology, as well as 3D video recording in Augmented Reality. I discovered a paper on the subject associated with a Kickstarter project (which has since been removed from the Internet). This spurred me to find out whether the technology was feasible.

DepthKit 3D VR Film making Tool

Double Me VR video recording in real time

I have since seen a demonstration of a similar technology (possibly a developed version of the same thing) from Double Me, a company set up to research and provide these services: http://www.doubleme.me/technology/. They are currently next door to Ravensbourne and are working alongside its Virtual Reality lab, using Ravensbourne’s seven Microsoft HoloLens AR headsets imported from the USA. I viewed a ballerina dancing using the HoloLens at the recent 2016 Lumen Prize giving. This was of substantially better quality than the videos shown on Double Me’s website. Their CEO Albert Kim invited me to record a VR video of up to 10 minutes in his studio, and suggested I do this after they substantially upgrade their recording quality from 2K to 4K this November. http://www.doubleme.me/new-doubleme-promo-video-by-carl-white/

Microsoft HoloLens

Double Me are working on a project with the Royal Opera House to make a VR recording of an opera and show it there on a Holographic Display (being designed in-house and planned to be built in China).

f. 3D Animation of the drawn and painted figure

I have researched the tools I need. So far I have used Cinema 4D/BodyPaint 3D and ZBrush, but only for exporting and rendering 3D scanned images to make the artwork I have already made. Additionally, as already explained, I have acquired hardware and software, namely a Wacom tablet for drawing, and Poser 11, DAZ Studio, and iClone 6 for creating 3D human characters and for animation. They are all installed on my MacBook Pro; so far I have seen what they can do, but have yet to use them.

The software provides shortcuts to sculpting and animating the human figure and includes male and female examples that can be altered using filters, with example animations that can be overlaid on the figure.

The resulting drawings, characters and animations can be viewed through the HoloLens in AR or holographically, as previously described. To be viewed in VR they need to be imported into the software ‘Unity’, about which I know little, but I can get help through the Digital Maker Collective, or possibly LCC.

g. Viewer interaction with the presented image

This interaction can be seen as an extension of any of the previously described projects. So far I have experimented with Arduino, proximity sensing, and audio. I have also experimented with Leap Motion, and will shortly extend this to the Microsoft Kinect. The animation software iClone, described earlier, is capable of linking to the latter to track human movement, which can be mirrored to create a 3D animation, or enable a viewer to replicate their own movements in the animation in real time.
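The proximity-triggered audio mentioned above can be sketched in outline. This is a minimal simulation in Python, not the Touch Board's own code: the sensor readings, the threshold values and the `ProximityTrigger` class are all hypothetical, chosen only to show how a reading that crosses a threshold might start a narrative once, without retriggering while the viewer stays close.

```python
from dataclasses import dataclass


@dataclass
class ProximityTrigger:
    """Fires once when a reading rises to `on_level`, and re-arms only
    after the reading falls back to `off_level` (simple hysteresis)."""
    on_level: int = 60    # hypothetical reading meaning "viewer is near"
    off_level: int = 40   # hypothetical reading meaning "viewer has left"
    active: bool = False

    def update(self, reading: int) -> bool:
        """Return True only on the reading that starts a new trigger."""
        if not self.active and reading >= self.on_level:
            self.active = True
            return True
        if self.active and reading <= self.off_level:
            self.active = False
        return False


def triggered_indices(readings):
    """Indices in `readings` at which the audio would start playing."""
    trigger = ProximityTrigger()
    return [i for i, r in enumerate(readings) if trigger.update(r)]


# Simulated readings: approach, linger, leave, approach again.
readings = [10, 30, 65, 70, 55, 35, 20, 62, 80]
print(triggered_indices(readings))  # prints [2, 7]
```

Using two thresholds rather than one is a design choice in this sketch: it stops a viewer hovering near the boundary from restarting the audio repeatedly.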

3. To use this research to contextualise my own practice

The contextualisation of my practice with those of other artists is an ongoing activity. It is informed by my research, my involvement in collaborative art projects, and by attending and participating in art exhibitions.

My sole experience of a collaborative project to date is a video/audio work entitled ‘Mother Earth’, made during the low residency earlier this year. Our group collaboratively decided on the project and how it should be constructed. My main contribution was the audio, which I made with my wife Suzy and edited using Audacity software.

Mother Earth

I participated in a week-long pop-up show at UAL Chelsea, organised by the UAL Digital Maker Collective, of which I am an active member. There I demonstrated use of the scanner and working with Arduino, sensors, and conductive materials. I will be doing the same at Mozfest 2016 at Ravensbourne at the end of October, and at the Tate Exchange, where the Digital Maker Collective will take over the whole of the fifth floor of the Switch House extension to Tate Modern, in February/March 2017. Both events will be attended by the general public.

4. To produce distinctive artworks to support my research

This is largely covered by what I have already described. No doubt my ideas will develop over the rest of my MA, but I am now confident that I can achieve this objective both for material and immaterial born-digital artworks.

Key Factors influencing changes to my Project Proposal

I have reflected long and hard about the research and making I have done to date, and will be updating my Project Proposal accordingly. I feel that only building on life drawing, largely of the female form, is too limiting.

This is best illustrated by my experience developing ‘Metamorphosis’. Prof Stephen Farthing was rather excited at the idea of giving sculptures a voice, and envisaged an exhibition with a great variety of works. What would an AK-47 say? Or a pineapple? When introducing the concept of touch to initiate narratives, issues also arose about touching a sculpture of a naked female. This resulted in my changing direction and initiating Vanessa’s narrative by picking up a book with the same title as the artwork.

Consequently, I now feel the need to broaden my agenda to the human figure, clothed as well as nude, male as well as female, and engaged in human activity, most probably dance.

My working title is now:

‘How technological innovation can provide new opportunities for the artistic presentation of the human form’.

My aims and objectives have thus also broadened:

‘To explore how far digital methods can extend the artistic presentation of the human form, and to demonstrate this by producing distinctive and differentiated artworks in both material and immaterial form’.

Making projects going forward

Until now my focus has been researching and making born-digital artworks where the final manifestation is in a material form, which I have termed ‘digital to physical’. I intend to continue with my plan to divide the second year of my MA into two parts: first, focusing on artworks where the final manifestation is in an immaterial form, which I refer to as ‘purely digital’; and second, using a mix of physical and purely digital to create my MA Show exhibit.

However, my work to date has led to my interest in applying for the UAL Chelsea Foundry Fellowship, and to do this I need to continue making further sculptures in the foundry at UAL Camberwell. Consequently, the material element of my MA Show exhibit will need to be another casting along the lines of my discussion with Tom and Richard, who head up the foundry at UAL Chelsea and who are the decision makers for the Foundry Fellowship.

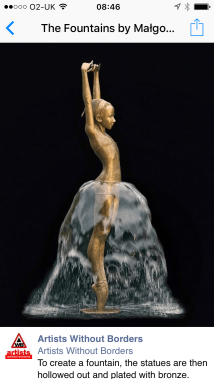

After I talked about my practice to date and showed pictures of my work, both Tom and Richard seemed keen. They are now sending me an application form, and would like me to keep them informed about my future work. The Fellowship is highly competitive. It lasts 6 months and, for me, could start immediately following the completion of my MA. I expressed an interest in blending digital scanning and printing with casting by directly making the mould in castable PLA, with runners and risers attached (I have identified where I can do this). I also said that I was interested in producing a life-sized bronze cast of the human body, and a morphed face, similar to the pictures below.

Sculpture ideas based on my existing wax and 3D print, life-sized in bronze

Sculpture ideas based on the example of others

To achieve this objective, I must continue making digital to physical sculptures of the human form, using 3D scanning, photogrammetry, 3D image manipulation, metal casting and, if possible, bronze resin sculpting. In November, in order to make a 3D self-portrait sculpture, I am being scanned in the Veronica Scanner, a photogrammetry device used in a recent exhibition at the Royal Academy of Arts.

https://terencemquinn91.org/2016/10/24/veronica-scanner-i-am-the-model/

The comparison between born-digital artworks in material and immaterial forms has been explored in my Research Paper ‘Digital to Physical – Made to last?’, which addresses the question ‘Will functioning digital art be part of our future cultural heritage?’. This paper is having a huge influence on the decisions I will make about my art practice after my MA. It has provoked an interest in undertaking a practice-based PhD, to explore the use of emerging technologies to address the issue of conserving complex digital art installations. I have attended a PhD introduction event at CSM, have booked another at UAL Chelsea, and am going to a TECHNE open evening to learn about funding opportunities. Again, this will influence the immaterial work I produce during the rest of my MA.

My first idea for an immaterial artwork is to collaborate with Aurelie Freoua, a recent MA Fine Art Digital graduate and painter. The aim is to produce a 3D painting in Tilt Brush which the viewer can see, walk around and walk through in Virtual Reality. A large-scale 2D image of the painting, as the Virtual Reality viewer would first see it, will be projected on to a real doorway. This is the image that the viewer will see as they put on the headset. They then walk through the door and into the same painting in Virtual Reality. Aurelie will create the painting in Tilt Brush and I will create the environment to make and display it in VR. My plan is to make the VR painting using the VIVE of either the UAL Digital Maker Collective or the 4D studio at CSM. I then hope to be able to view the painting in 3D and VR in my own much cheaper set-up, as previously described. I will thus need to check out this possibility first.

My second idea is to make a collaborative work with my current life model and dancer, Vanessa Abreu. This will show 3D footage of Vanessa dancing, either in AR or holographically. If it is used for my MA Show, I envisage a live performance alongside, say, the holographic display, which would also be projected on to a large screen.

The typical work I am aiming to conserve for a PhD will involve viewer interaction; I therefore need to understand the mechanisms for creating such interactions. Consequently, I will need to extend my use of the Bare Conductive Arduino Touch Board by using the recently released PiCap to connect a Raspberry Pi 3. For the same reason, I need to better understand Processing and Max/MSP to create programs for interactive artworks. This will enable me to experiment with alternative sensors triggering visual art beyond the capability of the Arduino, such as video and an Internet connection. I hope to use the proposed collaborative project with Romain Meunier, MA Visual Arts Resident Artist for the current academic year, as a vehicle for this learning experience. Additionally, I asked Alex May to participate in our Tate Exchange event and he will be putting forward a proposal. Again, this can be another learning opportunity for me.

I am also thinking of organising a symposium on the conservation of digital art, and am already engaged in conversation with several possible participants, including Douglas Dodds, the curator of the digital art collection at the V&A, with whom I discussed this possibility at the Lumen Prize.

These activities will equip me to undertake both the Foundry Fellowship and a practice-based PhD.

To be practical about the upcoming pop-up show at the end of this term, I aim not to create any new work, but to show some of my digital to physical art not exhibited so far.

Perspectives of Vanessa

These four canvasses together make the 3m x 75cm artwork ‘Perspectives of Vanessa’ to be shown at the Pop-Up exhibition at the end of Unit 1. Possibly the first image above will be projected on to the canvas, so that it can be seen rotating in 3D. I have already made this video, just in case.

Wherever I am with all these activities, and in order to concentrate on making for my final MA Show exhibit, I will stop all further practice-based research around the time of the next Low Residency and the Tate Exchange in Feb/March next year. I will then also decide whether to apply for a PhD, as applications need to be in by the end of April in order to begin at the start of the next academic year.
